Tailscale: Kubernetes Operator

The true story of how I got my code contribution accepted into a popular open-source VPN mesh networking codebase.

Tailscale is a well-funded networking company. In April 2025 they announced their Series C round: $160 million USD, led by Accel with participation from CRV, Insight Partners, Heavybit, and Uncork Capital. Their investor roster includes George Kurtz, CEO of CrowdStrike, and Anthony Casalena, CEO of Squarespace. They have a sizable engineering team of well-compensated professionals.

Even so, sometimes it takes a professional operator using a product in the field to find what the corporate dev/test team has missed.

What is an Exit Node?

By default, Tailscale operates as an overlay network: it connects your Tailscale devices to each other but leaves your regular outbound internet traffic untouched.

An exit node changes that. When a device is configured as an exit node, it advertises default routes (0.0.0.0/0 and ::/0), so other devices in your tailnet that select it as their exit node send all of their internet traffic through it, much like a traditional VPN.

A subnet router, by contrast, only advertises specific CIDRs (e.g. 10.8.0.0/16), routing traffic destined for those subnets through the node while leaving all other internet traffic alone.

These are two distinct routing roles. The Tailscale Kubernetes Operator exposes both via the Connector CRD, and the interaction between them on a single resource is exactly where the issue below surfaced.

More detail: tailscale.com/docs/features/exit-nodes

The Finding

While deploying the Tailscale Kubernetes Operator on GKE, I encountered unexpected runtime failures when using a single Connector resource with both exitNode: true and subnetRouter configured together.

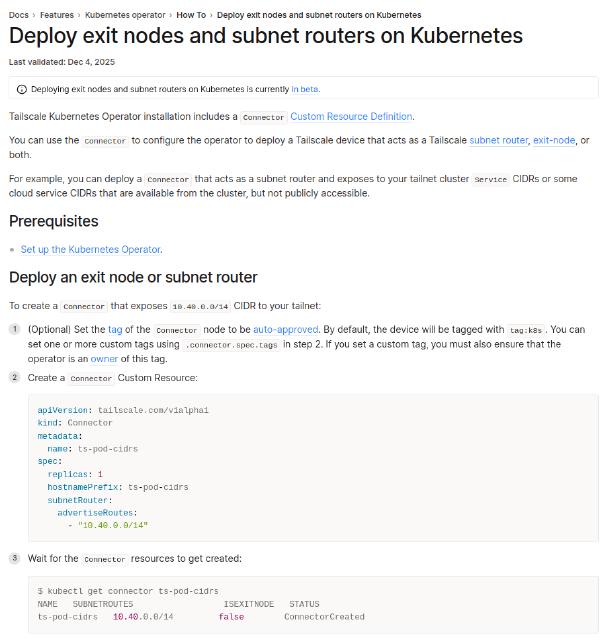

The official documentation page – “Deploy exit nodes and subnet routers on Kubernetes” – implied both items could be configured on the same Connector…

Notice in the screenshot below, the page title says “Deploy exit nodes and subnet routers”, and the intro text explicitly states you can configure a Connector to act as “a subnet router, exit-node, or both.”

That “both” is where their published documentation breaks down.

After grepping through the source code I established why the combination fails in practice.

cmd/k8s-operator/connector.go: the reconciler sets isExitNode and routes independently on the same struct, with no mutual-exclusivity guard between them:

    sts := &tailscaleSTSConfig{
        Connector: &connector{
            isExitNode: cn.Spec.ExitNode, // set from exitNode: true
        },
    }
    if cn.Spec.SubnetRouter != nil && len(cn.Spec.SubnetRouter.AdvertiseRoutes) > 0 {
        sts.Connector.routes = cn.Spec.SubnetRouter.AdvertiseRoutes.Stringify()
    }

Both fields are written without checking whether the other is already set. The struct accepts both, so the code compiles and deploys without error – the failure only surfaces at runtime.

net/netutil/routes.go: CalcAdvertiseRoutes merges subnet routes and exit node default routes into the same map:

    func CalcAdvertiseRoutes(advertiseRoutes string, advertiseDefaultRoute bool) ([]netip.Prefix, error) {
        routeMap := map[netip.Prefix]bool{}
        if advertiseRoutes != "" {
            // ... parses and adds each subnet CIDR to routeMap
        }
        if advertiseDefaultRoute {
            routeMap[tsaddr.AllIPv4()] = true // adds 0.0.0.0/0
            routeMap[tsaddr.AllIPv6()] = true // adds ::/0
        }
        // returns combined slice of all routes
    }

When both are active on the same Connector, 0.0.0.0/0 is added to the route map alongside the specific subnet CIDRs. A default route that matches all traffic subsumes the specific routes, creating routing conflicts and undefined behaviour on the node.
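The merge described above can be reproduced with a simplified stand-in written against the Go standard library only. This is a sketch for illustration, not the Tailscale implementation: `mergeRoutes` is a hypothetical name, the default routes are hard-coded rather than taken from tsaddr, and the real function may perform additional validation that is omitted here.

```go
package main

import (
	"fmt"
	"net/netip"
	"sort"
	"strings"
)

// mergeRoutes is a simplified, hypothetical stand-in for the merge
// behaviour described in the text. It collects comma-separated subnet
// CIDRs and, optionally, both default routes into a single set.
func mergeRoutes(advertiseRoutes string, advertiseDefaultRoute bool) ([]netip.Prefix, error) {
	routeSet := map[netip.Prefix]bool{}
	if advertiseRoutes != "" {
		for _, s := range strings.Split(advertiseRoutes, ",") {
			p, err := netip.ParsePrefix(strings.TrimSpace(s))
			if err != nil {
				return nil, fmt.Errorf("parsing route %q: %w", s, err)
			}
			routeSet[p.Masked()] = true
		}
	}
	if advertiseDefaultRoute {
		routeSet[netip.MustParsePrefix("0.0.0.0/0")] = true
		routeSet[netip.MustParsePrefix("::/0")] = true
	}

	// Flatten the set into a slice with a stable order for printing.
	out := make([]netip.Prefix, 0, len(routeSet))
	for p := range routeSet {
		out = append(out, p)
	}
	sort.Slice(out, func(i, j int) bool { return out[i].String() < out[j].String() })
	return out, nil
}

func main() {
	// Equivalent to exitNode: true plus one subnet route on one Connector.
	routes, err := mergeRoutes("10.8.0.0/16", true)
	if err != nil {
		panic(err)
	}
	for _, r := range routes {
		fmt.Println(r)
	}
}
```

With both inputs set, the resulting slice holds the subnet CIDR and both default routes side by side, which is the combined advertisement the paragraph above refers to.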

The CRD validation rules do not enforce mutual exclusivity: only appConnector is blocked from combining with the other two modes. The code is permissive, but the runtime result is broken. Separate Connector resources are required for each role.
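To make the missing check concrete, here is a minimal sketch of what a mutual-exclusivity guard could look like, together with a helper that splits a combined spec into one spec per role. Everything here is hypothetical: `connectorSpec`, `validate`, and `splitRoles` are illustration-only names and a trimmed-down model, not types or functions from the Tailscale operator.

```go
package main

import (
	"errors"
	"fmt"
)

// connectorSpec is a hypothetical, trimmed-down model of a Connector spec.
type connectorSpec struct {
	ExitNode        bool
	AdvertiseRoutes []string
}

var errCombinedRoles = errors.New(
	"exitNode and subnetRouter are both set; use separate Connector resources")

// validate rejects a spec that enables both roles at once.
func validate(spec connectorSpec) error {
	if spec.ExitNode && len(spec.AdvertiseRoutes) > 0 {
		return errCombinedRoles
	}
	return nil
}

// splitRoles returns one spec per role that the input enables.
func splitRoles(spec connectorSpec) []connectorSpec {
	var out []connectorSpec
	if spec.ExitNode {
		out = append(out, connectorSpec{ExitNode: true})
	}
	if len(spec.AdvertiseRoutes) > 0 {
		out = append(out, connectorSpec{AdvertiseRoutes: spec.AdvertiseRoutes})
	}
	return out
}

func main() {
	combined := connectorSpec{ExitNode: true, AdvertiseRoutes: []string{"10.8.0.0/16"}}
	if err := validate(combined); err != nil {
		fmt.Println("rejected:", err)
	}
	for _, s := range splitRoles(combined) {
		fmt.Printf("%+v valid=%v\n", s, validate(s) == nil)
	}
}
```

The split mirrors the workaround in practice: one Connector resource for the exit node and a second one for the subnet router.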

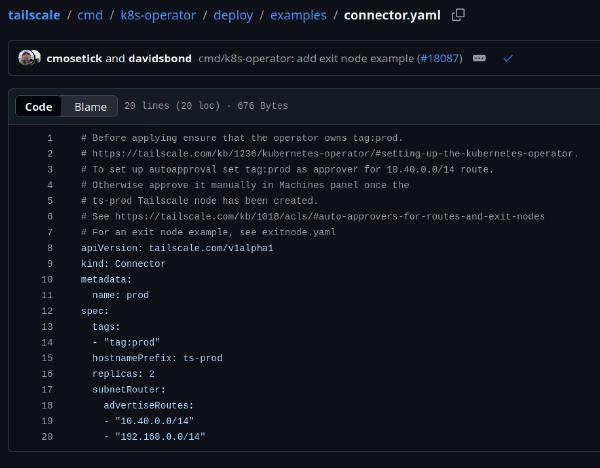

The existing connector.yaml example in the Tailscale repository had exitNode: true set alongside subnet routes, precisely the combination that causes problems. There was no dedicated exit node example to help someone like me at all.

To be fair, at the time of my discovery in October 2025, they did state that these are considered alpha/beta features of their current Kubernetes offering, which is actually part of the appeal for guys like me who want to test them out.

Contribution

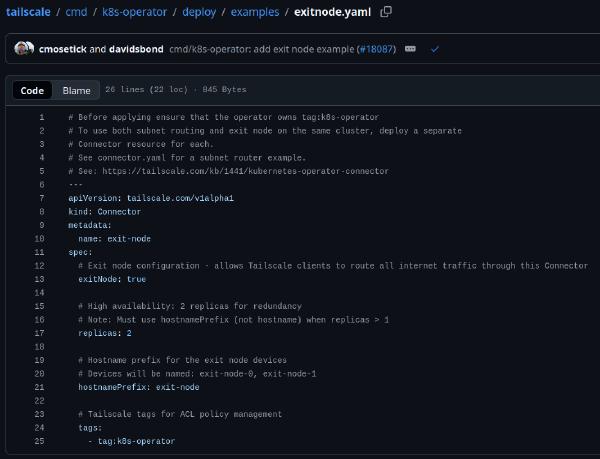

I filed issue #18086 documenting the problem, then submitted pull request #18087 with two changes:

New file: cmd/k8s-operator/deploy/examples/exitnode.yaml, a dedicated, correctly documented exit node Connector example.

Modified file: cmd/k8s-operator/deploy/examples/connector.yaml, where I removed the exitNode: true field (keeping it as a clean subnet router example) and added a cross-reference to the new exitnode.yaml.

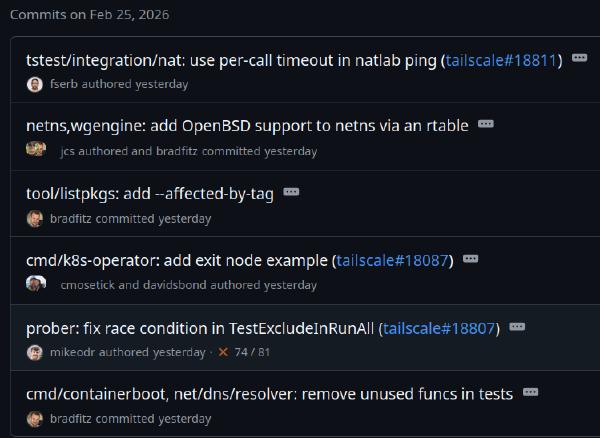

The PR was merged on 2026-02-25.

Both files are now part of the upstream Tailscale codebase.

This is a practical example of why field experience matters. The gap was not caught during the Tailscale team's development or internal review; it surfaced through real-world use on a production Kubernetes cluster.

Reference

PR #18087 – Merged into tailscale:main

The new exitnode.yaml – now live in the official git repo

The updated connector.yaml – exitNode field removed, cross-reference added

Commit history on Feb 25, 2026 – the PR lands alongside other Tailscale work

Thank You

Thanks to David Bond from Tailscale Inc. and the other Tailscale Inc. devs for helping me get my documentation changes into the Tailscale codebase.